[This article was prepared by Zenda Ofir based on a full paper by four authors – Zenda Ofir, Tom Schwandt, Colleen Duggan and Rob McLean. Zenda is an independent evaluation specialist from South Africa and former President of the African Evaluation Association. She is a founding partner of the newly established International Centre for Evaluation and Development (ICED) in Nairobi, which will be fully operational in 2017. See also zendaofir.com.]

The Research Quality Plus (RQ+) Assessment Framework is a recent contribution to efforts to improve how research quality is assessed. We designed the framework based on our extensive experience in research evaluation in different parts of the world. That experience convinced us that a systems perspective was needed to reframe and expand thinking about research quality. We also had a deep belief in the unique and beneficial role that high quality research can, and should, play in this challenging era, in which the Sustainable Development Goals intersect with the Fourth Industrial Revolution as drivers of development strategies.

The RQ+ approach is of particular relevance for impact-orientated think-tanks – not only for use in external reviews and evaluations, but also for its potential to enable critical reflection and adaptive management towards high quality, impactful research.

From a science-centric to a systems approach

Research quality assessments have traditionally been considered the purview of scientists. They are based on scientific values and criteria and rely on scientific peer review. At times, this peer review is replaced or informed by bibliometrics and scientometrics and, occasionally, by reputational studies. In essence, research quality assessment can be considered a closed system.

As we noted when introducing RQ+, this ‘science-centric’ approach is coming under increasing scrutiny. On the one hand, scientists are concerned about the validity and reliability of research metrics and about the obstacles these metrics pose to boundary-spanning, engaged scholarship. On the other hand, stakeholder demands for urgent solutions to ‘wicked’ problems, and for impact and value for investments, are compelling new thinking about the interaction between science and society, and about the entanglement of the two.

We therefore based the RQ+ Assessment Framework on the premise that a credible, comprehensive assessment of research quality must consider more than research outputs. RQ+ acknowledges four points. First, research efforts are nested in, and influenced by, economic, political and socio-cultural circumstances. Second, research quality has meaning only in context. Third, scientific merit is an essential yet insufficient condition for judging research quality. Fourth, pathways to research uptake, use, influence and impact are convoluted and unpredictable, and they often involve multiple linkages and exchanges among researchers, policymakers, practitioners and/or the private sector.

Thus the context(s) in which the research is conducted and the manner in which it is managed matter. So do different perspectives on the relevance, credibility, legitimacy and utility of the research, from both within and outside the scientific community. Researchers and research managers cannot control research effectiveness, and therefore cannot be held accountable for achieving impact. They can, however, be held accountable for positioning their research for use.

The RQ+ Assessment Framework

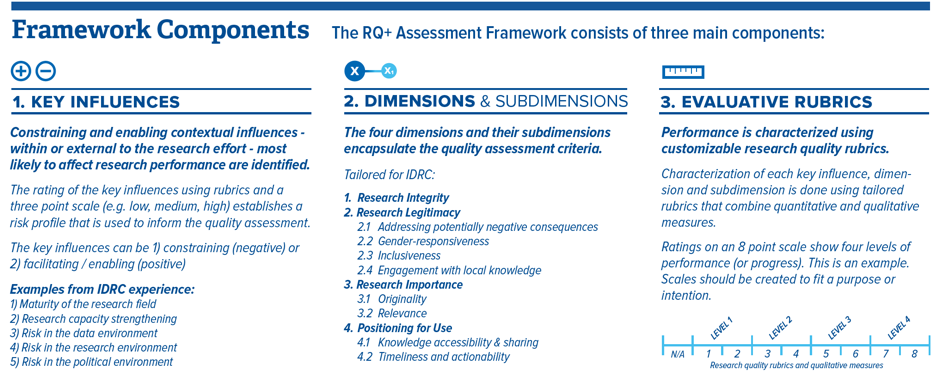

We developed the RQ+ Assessment Framework in response to IDRC’s desire to advance global research evaluation practice while also bringing a degree of standardization and transparency to its own assessment of research quality. We also developed the RQ+ Assessment Instrument to guide the operationalization of the framework. IDRC then applied the framework in a series of External Reviews conducted by teams of scientific experts from around the world.

We captured the experiences and lessons learned in the publication that introduced the RQ+ approach. The first tests and applications confirmed the benefits of the RQ+ approach for summative reviews. However, the reviewers, together with IDRC, also identified several challenges. The most significant was the time-consuming nature of the assessment. This is one of the reasons why we regard the RQ+ approach as particularly suitable for management purposes, as discussed below.

The strengths of the RQ+ approach

The RQ+ approach has shown several important strengths:

First, it recognizes the value of context-sensitive, engaged, boundary-spanning scholarship.

Second, it enables consideration of key constraining and enabling influences on the research. This helps to establish the risk profile of the research endeavor, which in turn informs the quality management and/or assessment efforts.

Third, it supports a uniform approach to assessing research quality across different types of research through the establishment of four quality dimensions. Each dimension is defined by a set of sub-dimensions that can be tailored to the specific context in which the research is being assessed. Two of the dimensions, Research Legitimacy and Positioning for Use, can also be adjusted to suit the type of research and the program or organizational mandate.

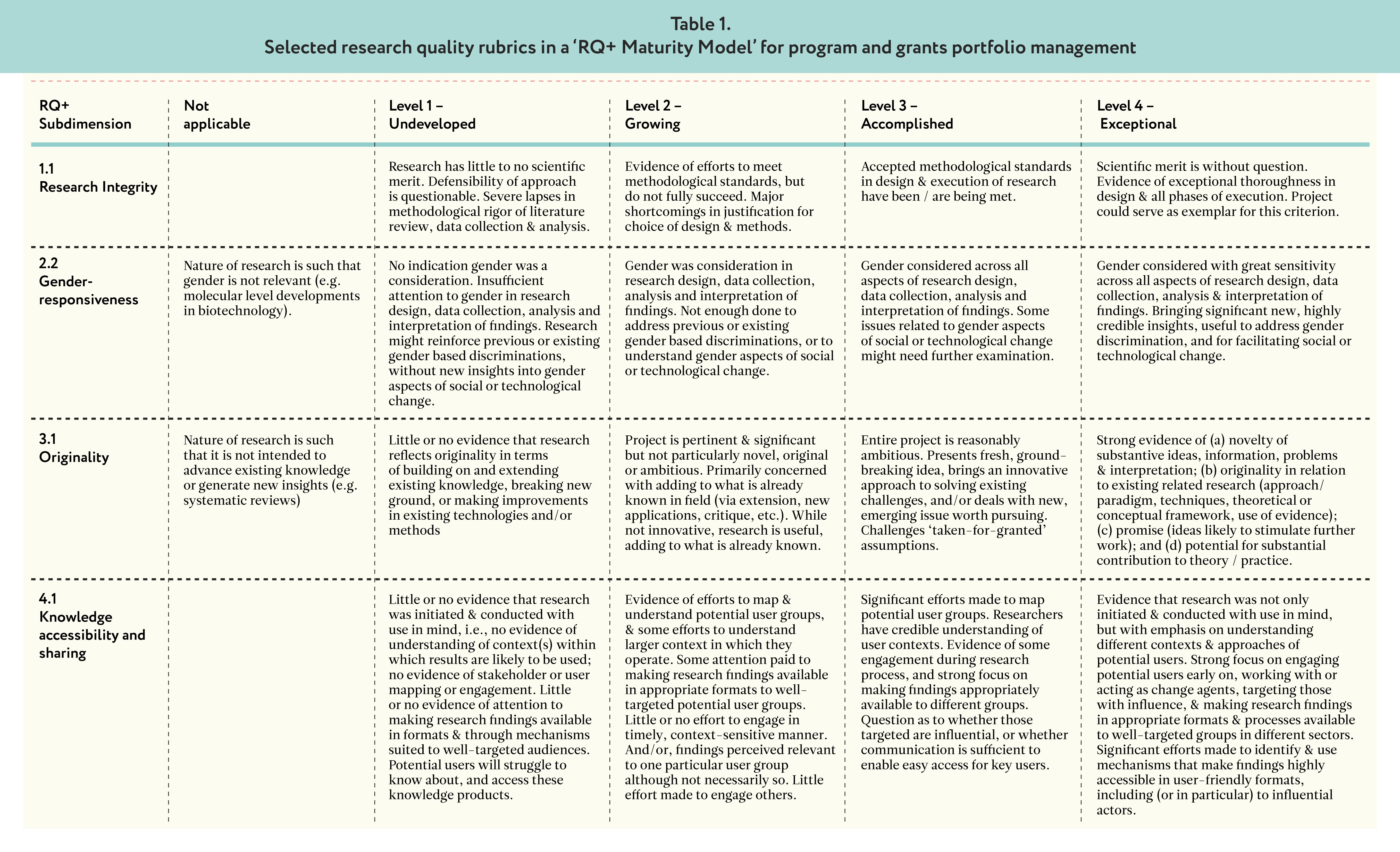

Fourth, it uses rubrics that enable the integration of qualitative and quantitative, factual and perceptual data. The rubrics form the basis of a rating system for the assessment of progress and performance towards high quality research. The rubrics and ratings can be tailored to the values, mandate and needs of a program, organization or collaborative effort. The rubrics make clear what values underlie the notions and rating of ‘quality research’, and improve the accuracy and comparability of the quality ratings.

Fifth, it engages multiple stakeholders, whose perspectives enrich insights into the significance, merit, worth and/or value of the research. The final assessment, however, remains within the realm of scientists.

Sixth, the framework can be applied at a specific point in time, or used for monitoring over a longer period as part of an adaptive management approach (as described below). It can also be used – where appropriate, and with the necessary nuance – to compare the performance of different research initiatives.

The RQ+ approach and adaptive management

Evaluation in the research domain should be a meaningful, empowering activity. It plays this role when it is used in adaptive management. Although its use for this purpose has yet to be tested, we developed the RQ+ approach with this purpose in mind. We believe it will allow for well-paced data collection for monitoring and critical reflection. This will empower those involved in research program or portfolio management and implementation, make formative and summative assessments much easier and quicker, and help steer research interventions or portfolios towards influence and impact. These benefits matter most where research capacities are still under development, or where research contexts are particularly demanding.

First, managers, staff, grantees and other partners can cultivate a shared understanding of, and common approach to, research quality if they focus on the quality dimensions and sub-dimensions during the conceptualization or management of research interventions or portfolios. The RQ+ approach enables them to identify and articulate the values and principles underlying their research and its management. It also supports target setting and the development of milestones for tracking progress.

Second, a focus on key influences and quality dimensions can alert research program or portfolio designers and proposal assessors to aspects that should be considered, help establish the risk profile of a particular intervention, and strengthen planning for quality. Rubrics can be tailored for this purpose.

Third, research teams can show that they are sufficiently context-sensitive when positioning their work for uptake. In other words, they can work towards, and demonstrate, a solid understanding of the domain(s) in which their research is likely to be used, and build up useful tactics to position their research for use.

Fourth, the rating system of the RQ+ approach facilitates comparison and roll-up of quality assessments across projects, programs or portfolios.
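To make the idea of rolling up ratings more concrete, here is a minimal Python sketch built on several assumptions. It assumes that ratings are integers on a hypothetical 1-to-8 scale. It shows only the two dimensions named above, Research Legitimacy and Positioning for Use. It also assumes that a program's or portfolio's score on each dimension is simply the mean of its projects' ratings. The `ProjectAssessment` class, the `roll_up` function and the sample data are hypothetical illustrations. They are not part of the RQ+ Assessment Instrument.

```python
from dataclasses import dataclass
from statistics import fmean

# Hypothetical rating bounds: RQ+ rubrics are tailored to each program, so these are assumptions.
MIN_RATING, MAX_RATING = 1, 8


@dataclass
class ProjectAssessment:
    """Rubric ratings for one project, keyed by quality dimension (hypothetical structure)."""
    project: str
    program: str
    ratings: dict[str, int]

    def __post_init__(self) -> None:
        # Reject ratings that fall outside the agreed scale.
        for dimension, rating in self.ratings.items():
            if not MIN_RATING <= rating <= MAX_RATING:
                raise ValueError(f"{self.project}: {dimension} rating {rating} is out of range")


def roll_up(assessments: list[ProjectAssessment], by_program: bool = True) -> dict[str, dict[str, float]]:
    """Average each dimension's ratings within each program, or across the whole portfolio."""
    groups: dict[str, list[ProjectAssessment]] = {}
    for a in assessments:
        key = a.program if by_program else "portfolio"
        groups.setdefault(key, []).append(a)

    summary: dict[str, dict[str, float]] = {}
    for key, members in groups.items():
        dimensions = sorted({d for m in members for d in m.ratings})
        # Only projects that were rated on a dimension contribute to its average.
        summary[key] = {
            d: round(fmean(m.ratings[d] for m in members if d in m.ratings), 2)
            for d in dimensions
        }
    return summary


# Illustrative data only: project names, programs and ratings are invented.
assessments = [
    ProjectAssessment("P1", "Water", {"Research Legitimacy": 6, "Positioning for Use": 5}),
    ProjectAssessment("P2", "Water", {"Research Legitimacy": 4, "Positioning for Use": 7}),
    ProjectAssessment("P3", "Health", {"Research Legitimacy": 7, "Positioning for Use": 3}),
]
print(roll_up(assessments))
# {'Water': {'Positioning for Use': 6.0, 'Research Legitimacy': 5.0}, 'Health': {'Positioning for Use': 3.0, 'Research Legitimacy': 7.0}}
print(roll_up(assessments, by_program=False))
# {'portfolio': {'Positioning for Use': 5.0, 'Research Legitimacy': 5.67}}
```

A simple mean hides differences between projects. In keeping with the nuance the framework asks for when comparing initiatives, a practical roll-up would probably also report the spread of ratings and the context behind them.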

Fifth, research and grant portfolios can be mapped according to research typologies, their risk profile or their potential for practical application (for example, by using Pasteur’s Quadrant or McNie’s classification). When done early, such stratification enables more nuanced and meaningful accountability systems. In these systems, performance trajectories and progress towards expected performance levels can be tracked, justified and managed towards impact, for example by using an RQ+ ‘Maturity Model’. As demonstrated in the table below, a maturity model is a set of structured levels that describe how well the behaviors, practices and processes of an organization or partnership can reliably and sustainably produce required outcomes. It can therefore be used for benchmarking and tracking performance over time.

In conclusion, it is only through application and further adjustment and refinement that the potential of the RQ+ approach can be fully explored. Think-tanks are very well positioned to take on this challenge. If we do not experiment, we cannot advance our understanding or practices.
